When humanoid robots gently help seniors in nursing homes, when robotic welding arms execute millimeter-precise work on factory floors, and when bomb-disposal machines roll into danger zones, the scenes no longer belong to science fiction.

According to the China Development Report 2025, the country’s embodied intelligence market is on track to reach 400 billion yuan by 2030 and exceed 1 trillion yuan by 2035.

Along with rapid adoption, risks tied to these technologies are spreading just as fast—spurring China’s major insurers to enter a new frontier. Since September, companies including Ping An Property & Casualty, CPIC P/C, and PICC P/C have launched specialized insurance policies for embodied intelligence systems, hoping financial innovation can make the sector safer to scale.

These insurers are attempting to build a dual-track protection model—covering both damage to robots themselves (“body loss”) and third-party liabilities. Yet the new landscape is marked by a thicket of unresolved issues: inconsistent loss assessment standards, gaps in risk coverage, and a regulatory framework struggling to keep pace.

Insurers Push Full-Chain Protection as Robotics Scale Up

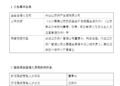

China Pacific Property Insurance (CPIC P/C) has emerged as one of the earliest movers. Its Ningbo branch launched a “Smart Protection” policy that covers the entire chain—from manufacturing and sales to leasing and usage. The model centers on “humanized risk assessment,” which simulates human behavior patterns to evaluate mechanical risk exposure.

The policy bundles coverage for robot body damage, third-party liability, and property loss, and offers scenario-specific clauses for accidents such as welding-arm malfunctions and rescue-robot falls. Importantly, it provides daily, weekly, and monthly pricing options—an attempt to adapt to surging robot leasing and the short-term needs of small and medium-sized businesses.

“This type of flexible protection directly targets core industry bottlenecks,” said Wang Peng, associate researcher at the Beijing Academy of Social Sciences. But he noted that pricing still lags behind the reality of robot usage: “Current models don’t sufficiently factor in usage frequency, making premiums artificially low. A usage-based insurance model is necessary for real-time adjustments.”
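To make Wang's point concrete, the following is a minimal sketch of how a usage-based premium might scale with operating hours. It is an illustration only, not any insurer's actual pricing model: the `UsageRecord` type, the `usage_based_premium` function, the 8-hour reference day, and the clamping bounds are all hypothetical assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """Telemetry for one coverage period (hypothetical fields)."""
    days_covered: int        # length of the daily/weekly/monthly policy, in days
    operating_hours: float   # total hours the robot actually ran in that period


def usage_based_premium(
    record: UsageRecord,
    base_daily_rate: float,
    reference_hours_per_day: float = 8.0,  # assumed "normal" utilization
    min_factor: float = 0.5,               # assumed floor on the usage multiplier
    max_factor: float = 2.0,               # assumed cap on the usage multiplier
) -> float:
    """Scale a flat daily rate by how intensively the robot was used."""
    if record.days_covered <= 0:
        raise ValueError("days_covered must be positive")
    if record.operating_hours < 0:
        raise ValueError("operating_hours cannot be negative")

    # Average daily utilization relative to the reference workload.
    avg_hours = record.operating_hours / record.days_covered
    factor = avg_hours / reference_hours_per_day

    # Clamp so light users still pay a minimum and heavy users hit a ceiling.
    factor = max(min_factor, min(max_factor, factor))

    return round(base_daily_rate * record.days_covered * factor, 2)


if __name__ == "__main__":
    weekly_normal = UsageRecord(days_covered=7, operating_hours=56.0)
    weekly_heavy = UsageRecord(days_covered=7, operating_hours=112.0)
    print(usage_based_premium(weekly_normal, base_daily_rate=100.0))
    print(usage_based_premium(weekly_heavy, base_daily_rate=100.0))
```

Under these assumptions, a robot run twice as hard as the reference workload pays twice the flat rate for the same week, which is the kind of adjustment a frequency-blind model would miss. A real product would presumably draw on richer signals than hours alone.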

PICC P/C, meanwhile, introduced a dual-product package combining body loss insurance with third-party liability insurance. In addition to traditional risks like collisions and typhoons, PICC’s policies now cover system crashes stemming from cyberattacks and algorithmic faults—a first for China’s robot insurance segment.

In November, PICC’s Suzhou branch issued Jiangsu Province’s first third-party liability policy for embodied intelligence, with coverage up to 1 million yuan, focusing on delivery-robot accidents that may lead to medical compensation claims.

Yet despite these experiments, insurers acknowledge they still lack consistent loss-assessment protocols—especially in environments where risks occur frequently, such as industrial welding, logistics robotics, and search-and-rescue operations.

The difficulty of attributing robot failures poses one of the industry’s biggest challenges. A robotic arm’s sensor failure could be caused by operator error or by an algorithm defect. Disentangling the two is often impossible without deep access to the robot’s internal data.

Currently, insurers are working closely with manufacturers, forming joint assessment teams to accelerate claims processing. A recent insurance package launched in Hangzhou—the “Trinity” plan—adds layers of technical analysis and legal consultation to help resolve disputes. But these efforts remain piecemeal, and a national standard has yet to emerge.

Damage assessment is further complicated by the hybrid nature of embodied intelligence, where mechanical components and software are intertwined. Failures often involve multi-step causal chains triggered by both hardware degradation and algorithmic misjudgment.

One robotics industry veteran said the field urgently needs shared risk databases. “Insurers must build real-time data-sharing systems with manufacturers,” he said. “Claims can’t remain purely retrospective. Prevention must become part of the framework.”

Wang Peng echoed this point, recommending a two-part process—“data extraction plus third-party appraisal”—to standardize evaluations and reduce disputes over algorithm-related faults.

Human-Robot Interaction Creates New Gaps: Ethics, Privacy, and Accountability

Insurance products today still struggle to address the complexities of human-robot interaction. The risks extend far beyond mechanical failures, touching on ethics, emotions, privacy, and medical decisions.

Consider eldercare robots: if a navigation error causes an older adult to fall, who is responsible? The maker? The operator? The insurer? Policies today treat such incidents inconsistently, and their terms lack clarity.

“Most liability insurance is limited to physical injuries,” Wang said. “It doesn’t cover ethical risks—algorithmic discrimination, emotional harm, privacy leaks. These are increasingly common.”

Insurers are trying to reduce these blind spots. Ping An’s “Comprehensive Financial Solution” includes risk protection for data-compliance issues tied to R&D, offering legal services for cross-border data processing—critical for companies developing humanoid robots with cloud-connected systems.

Still, quantifying ethical harm remains nearly impossible. Insurers say they need collaboration from academics, robotics experts, and regulators to build robust assessment models.

As robots become more autonomous and more connected, the question of accountability grows urgent. If a robot loses control due to a cyberattack, should the incident be classified as an equipment failure or a liability incident? Policies from major insurers vary widely.

CPIC includes “abnormal operation” as an insurable risk; PICC explicitly excludes losses caused by “intentional malicious manipulation.” These inconsistencies, combined with fragmented regulation, have created what some executives call a “responsibility vacuum.”

Wang argued for a top-down regulatory architecture: “We need unified standards to define triggers and compensation thresholds for cybersecurity breaches and algorithm anomalies. And we need dual responsibility lines to determine who pays.”

He suggested borrowing from the EU’s tiered regulatory model and setting differentiated capital requirements for insurers operating in high-risk robot categories.

Industry insiders emphasize that embodied intelligence has already become part of national strategy, and they are calling for the National Financial Regulatory Administration to work with the Ministry of Industry and Information Technology to build integrated rules.

They say the country urgently needs three types of standards:

- Risk-definition standards for algorithm anomalies, data breaches, and cyberattacks.
- Technical assessment protocols for system failures, from sensor burnout to software miscalibration.
- Solvency requirements to prevent insurance firms from being overwhelmed by claims in a fast-moving, high-uncertainty sector.

Local governments are beginning to experiment. In Hangzhou’s Binjiang district, the Embodied Intelligence Industry Alliance has created standardized contracts between insurers and robotics firms. These agreements clearly define responsibilities—manufacturers handle technical defects, while users assume liability for improper operations—offering one possible blueprint for a maturing market.

As embodied intelligence becomes a core element of China’s future industrial system, insurers face both a risk and an opportunity: exposure to losses that remain hard to assess and price, and the chance to build a new financial safety net for machines that increasingly work alongside humans.
